The untapped potential of virtual sensors for digital twins

A digital twin is a computer-modelled representation of something that exists in the real world.

To work, digital twins often need a vast array of sensors that provide real-world data from their physical twin. However, it isn't always practical to add physical sensors wherever they're needed.

But what if you didn't need to rely on physical sensors to build a digital twin?

Virtual sensors offer a way around the constraints of digital twinning when adding physical sensors isn't possible.

Digital twinning and virtual sensors have come a long way in the past five years, thanks to advances in a host of supporting technologies that bring insights and predictions to complex systems.

So, if you’re wondering:

a) What are digital twins?

b) What are virtual sensors?

c) How do virtual sensors work with digital twins? And…

d) What could digital twins do for my assets?

You’ve come to the right guide.

Read on to find out.

What is a digital twin?

A digital twin is a simulated interpretation of an “object”. That object could be a building, an aircraft, an electric car, a satellite or even a medical device inside a person. It could be any object so long as it’s something that “changes over time”.

It’s not static, this simulation, this twin. It’s dynamic, fed by information from sensors placed on and within the object. The gathered data is run through models to create a virtual representation of that object (even in real time, if needed).

There are numerous definitions of digital twins, but one element is essential for something to count as a digital twin. As Louise Wright and Stuart Davidson explain in How to tell the difference between a model and a digital twin:

“One of the key aspects of the parts listed above is that a digital twin has to be associated with an object that actually exists: a digital twin without a physical twin is a model.”

So, the twin must reflect an object that exists in the real world.

All of this is possible because of advances in IoT technology, 4G and 5G, and improvements in big data analytics. And you can combine all of this with artificial intelligence, through machine learning, to gain valuable insights during a system’s lifetime.

A digital twin doesn’t just have to be something you look at on a computer screen. You can also view it in an augmented reality (AR) or virtual reality (VR) environment.

What are the advantages of digital twins?

Because digital twins are realistic, dynamic models of their real-world counterparts, you can use them to try out “what if” scenarios or monitor current use. You can also use a twin to replay a sequence of actions to analyse what caused a fault.

Some advantages of digital twins apply generally; others depend on the object and sector. The primary benefits can include:

- Enhancing early-stage product or system development by pairing prototypes with data modelled by a digital twin to inform design.

- Reducing maintenance costs by generating reliable predictions that cut downtime and unnecessary parts purchases.

- Improving fault fixing, because you’ll have deeper insight into what caused the problem in the first place.

- Optimising performance and reliability over the course of an object’s lifetime, helping to inform things like software updates and preventative maintenance.

- Gathering insights into usage, energy use, material flow and wear to inform strategic decisions about the object’s sustainability.

- Predicting outcomes for the object to help inform decision making or feed into an automated response.

What you gain from digital twinning will depend on your use case and the goals you’re trying to achieve.

What about at scale?

If you’re concerned about scaling digital twins to cover many “assets”, new mathematical models are helping to make this a reality. Work by Michael Kapteyn of MIT and others has helped to create a:

“[…] [Unifying] mathematical representation of the relationship between a digital twin and its associated physical asset that was not specific to a particular application or use.”

The model moves digital twins away from the “one-off, highly specific digital twins” we’ve seen so far.

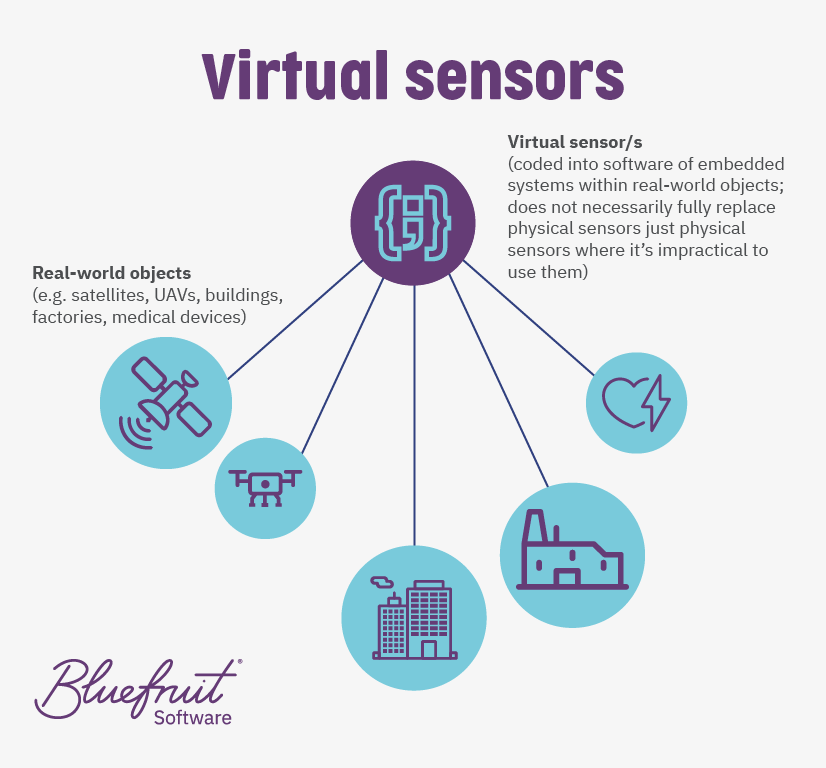

What is a virtual sensor?

A virtual sensor is a software component that infers the state of an object without direct access to a specific physical sensor.

Traditionally, virtual sensors combine physically measured data with a mathematical model. The virtual sensor approximates what a physical sensor would have picked up had one been fitted, using a model built from past physical sensor readings.
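To make the traditional approach concrete, here is a minimal Python sketch under a deliberately simple assumption: one signal that stays available on the device (the “proxy”) is linearly related to the quantity a physical sensor used to measure. The class name `LinearVirtualSensor` and the straight-line model are illustrative assumptions, not a description of any particular product; real virtual sensors often use richer physics-based or multi-input models.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class LinearVirtualSensor:
    """Hypothetical virtual sensor: estimates what a (removed) physical sensor
    would read, from a proxy signal that is still available on the device.
    Assumes a linear relationship between proxy and measured quantity."""
    slope: float = 0.0
    intercept: float = 0.0

    def calibrate(self, proxy: Sequence[float], measured: Sequence[float]) -> None:
        # Ordinary least-squares fit of: measured ~= slope * proxy + intercept,
        # using past paired readings taken while the physical sensor was fitted.
        n = len(proxy)
        if n != len(measured) or n < 2:
            raise ValueError("need at least two paired readings")
        mean_x = sum(proxy) / n
        mean_y = sum(measured) / n
        sxx = sum((x - mean_x) ** 2 for x in proxy)
        if sxx == 0:
            raise ValueError("proxy signal does not vary, so it cannot be fitted")
        sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(proxy, measured))
        self.slope = sxy / sxx
        self.intercept = mean_y - self.slope * mean_x

    def read(self, proxy_value: float) -> float:
        # After calibration the physical sensor is no longer needed:
        # the model approximates the reading it would have produced.
        return self.slope * proxy_value + self.intercept


# Usage: calibrate on historical pairs, then estimate a new reading.
sensor = LinearVirtualSensor()
sensor.calibrate([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])
print(sensor.read(5.0))  # 11.0
```

The point of the sketch is the workflow rather than the maths: past physical readings are used once to fit the model, and from then on the model stands in for the sensor.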

Thanks to advances in machine learning and artificial intelligence, virtual sensors are now shifting towards using data generated by an object’s own operation or use. During software development, the AI is trained on the object’s data so that it learns to recognise varying conditions.

Bluefruit Software’s research and development into edge AI has helped us build AI models that function as virtual sensors within embedded systems. Edge AI is AI that runs on the device itself, without necessarily being connected to a network.

Why would you need virtual sensors?

There are times when using a physical sensor, or a large number of them, isn’t practical. You might need virtual sensors:

- Where adding a physical sensor would get in the way of an object’s use (including the movement of materials) or could interfere with other signals.

- When the object is a low- to ultra-low-power system and powering physical sensors isn’t practical.

- In aerospace, where additional physical sensors could add too much weight to an object, affecting even the launch, orbit or travel of space-bound systems.

- When fitting all the sensors needed would make the object too large for its intended use, as with a pacemaker.

Cost, on the other hand, is unlikely to be a barrier to using physical sensors. The average price of IoT sensors, for instance, dropped from $1.30 to $0.38 between 2004 and 2020.

Why you should consider edge AI-led virtual sensors for digital twinning

Depending on the changes within and around the objects that are pushing you towards digital twinning, you may find that relying entirely on physical sensors is a problem. Power, space or positioning constraints can make building capacity for digital twinning seem unrealistic.

But digital twinning needn’t be unrealistic if you start looking at where you can substitute virtual sensors for physical ones.

Taking this idea further, whatever the object involved: if you already have to use non-AI virtual sensors, AI-enabled ones can work too. That makes edge AI-led virtual sensing a sensible option.

Every electronic system in use has elements of its operation that leave a trail you could use to infer its changing state. The task is to find the right trail, the proper signal, and then train an AI to interpret it.

Virtual sensing in action

We are currently using the edge AI we developed in our R&D work (patent pending) to bring virtual sensing to a client product.

Adding physical sensors to this specific product would directly affect its operation. Instead, we’re using machine learning to interpret one of the signals the product leaves: the current variation (which is proportional to torque) in its brushless motor. By analysing this variation with the edge AI we’ve developed, we can infer the device’s operational states and use the AI as a virtual sensor.
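As an illustration only, the general idea of inferring operational states from motor current can be sketched in Python with a deliberately simple technique: summarise each window of current samples by its mean level and its variation, then report the state whose average features (learned from labelled example windows) are nearest. This is a nearest-centroid classifier, not Bluefruit’s patent-pending edge AI; the class and function names, the state labels and the sample values are all hypothetical.

```python
import math
import statistics
from typing import Dict, List, Sequence, Tuple

# (mean current, current variation) for one window of samples
Features = Tuple[float, float]


def window_features(current: Sequence[float]) -> Features:
    # Summarise a window of motor-current samples by its mean level and its
    # variation (population standard deviation). Since motor current tracks
    # torque, both features reflect how the motor is being loaded.
    return statistics.mean(current), statistics.pstdev(current)


class NearestCentroidStateSensor:
    """Hypothetical virtual sensor that labels operating states from current."""

    def __init__(self) -> None:
        self.centroids: Dict[str, Features] = {}

    def train(self, labelled: Dict[str, List[Sequence[float]]]) -> None:
        # For each known state, average the features of its example windows.
        for state, windows in labelled.items():
            feats = [window_features(w) for w in windows]
            self.centroids[state] = (
                statistics.mean(f[0] for f in feats),
                statistics.mean(f[1] for f in feats),
            )

    def infer(self, window: Sequence[float]) -> str:
        # Return the state whose centroid is closest (Euclidean distance).
        f = window_features(window)
        return min(self.centroids, key=lambda s: math.dist(f, self.centroids[s]))


# Usage with made-up example windows (amps); real data would come from the device.
sensor = NearestCentroidStateSensor()
sensor.train({
    "idle": [[0.10, 0.10, 0.10, 0.10], [0.12, 0.10, 0.11, 0.10]],
    "loaded": [[1.0, 1.4, 0.8, 1.2], [1.1, 1.5, 0.9, 1.3]],
})
print(sensor.infer([1.0, 1.5, 0.9, 1.2]))  # loaded
```

A nearest-centroid model is chosen here only because it stores a couple of numbers per state, which hints at why small models can suit constrained embedded devices; a production virtual sensor would need much more careful signal selection, feature design and validation.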

Do I have a system or device that will benefit from digital twinning?

Now is the time to be asking yourself if you have a system that could benefit from digital twinning.

If the thing you want to build a digital twin of exists and changes over time, it’s a potential candidate for twinning.

Consider the above alongside strategic decisions about:

- Design

- Operational states

- Automated decision making

- Sustainability

- User experience (UX)

- Improving outcomes

Doing so will help you identify whether twinning will bring value.

Your decision may also be influenced by recent work on predictive digital twins at scale, which suggests digital twins could also work across fleets of systems. Such fleets could range from drones and satellites to entire factory chains, autonomous land vehicles and medical device implants.

Want to see where virtual sensors and digital twinning can take you?

For over 20 years, Bluefruit Software has worked closely with its clients to deliver high-quality embedded software and firmware solutions across a range of sectors. Whether you’re looking to add AI, build out digital twinning or find alternative sensing technologies, our team can help.
